
In a recent LinkedIn post, Gary Illyes, Analyst at Google, highlights lesser-known aspects of the robots.txt file as it marks its 30th year.

The robots.txt file, a web crawling and indexing component, has been a mainstay of SEO practices since its inception.

Here’s one of the reasons it remains useful.

Robust Error Handling

Illyes emphasized the file’s resilience to errors.

“robots.txt is virtually error free,” Illyes said.

In his post, he explained that robots.txt parsers are designed to ignore most mistakes without compromising functionality.

This means the file will keep working even if you accidentally include unrelated content or misspell directives.

He elaborated that parsers typically recognize and process key directives such as user-agent, allow, and disallow while overlooking unrecognized content.
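To make that behavior concrete, here is a minimal sketch using Python’s standard-library `urllib.robotparser`. It is not Google’s parser, and the robots.txt content, user agent, and example.com URLs are hypothetical, but it shows the same general lenience: lines the parser cannot interpret are skipped, while the recognized rules still take effect.

```python
from urllib.robotparser import RobotFileParser

# A deliberately messy, hypothetical robots.txt: alongside valid rules it
# contains stray prose, leftover HTML, and a made-up directive name.
MESSY_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
This line is not a directive at all
<html><body>stray markup</body></html>
Unknown-directive: whatever
Allow: /public/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text directly, without fetching anything."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(MESSY_ROBOTS_TXT)

# The junk lines are ignored; only Disallow and Allow decide the outcome.
for path in ("/private/report.html", "/public/index.html", "/about"):
    allowed = parser.can_fetch("Googlebot", "https://example.com" + path)
    print(f"{path}: {'allowed' if allowed else 'blocked'}")
```

Running it should report `/private/report.html` as blocked and the other two paths as allowed, exactly as if the stray lines were not there.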

Unexpected Feature: Line Comments

Illyes pointed out the presence of line comments in robots.txt files, a feature he found puzzling given the file’s error-tolerant nature.

He invited the SEO community to speculate on the reasons behind their inclusion.
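For readers less familiar with the syntax: a line comment starts with `#`, and everything after it on that line is ignored by the parser. The minimal sketch below, again using Python’s `urllib.robotparser` with hypothetical rules and URLs, checks that a commented file and a comment-free copy produce identical crawl decisions, which is what leaves comments free to act as notes for humans.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt with a full-line comment and a trailing comment.
COMMENTED = """\
# Note from the dev team: keep the staging copy out of search.
User-agent: *
Disallow: /staging/  # unfinished pages live here
"""

# The same rules with every comment removed.
UNCOMMENTED = """\
User-agent: *
Disallow: /staging/
"""

URLS = [
    "https://example.com/staging/draft.html",
    "https://example.com/blog/post",
]


def fetch_map(robots_txt: str, urls: list[str]) -> dict[str, bool]:
    """Map each URL to whether a generic crawler ("*") may fetch it."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch("*", url) for url in urls}


print(fetch_map(COMMENTED, URLS))
print(fetch_map(COMMENTED, URLS) == fetch_map(UNCOMMENTED, URLS))  # True
```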

Responses To Illyes’ Post

The SEO community’s response to Illyes’ post provides further context on the practical implications of robots.txt’s error tolerance and the use of line comments.

Andrew C., Founder of Optimisey, highlighted the usefulness of line comments for internal communication, stating:

“When working on websites you can see a line comment as a note from the Dev about what they want that ‘disallow’ line in the file to do.”

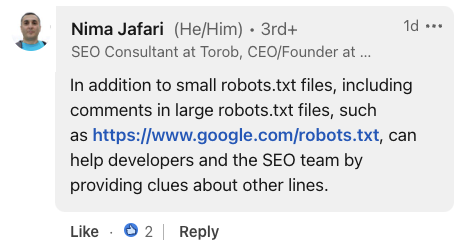

Nima Jafari, an SEO Advisor, emphasized the value of comments in large-scale implementations.

He noted that for extensive robots.txt files, comments can “help developers and the SEO team by providing clues about other lines.”

Screenshot from LinkedIn, July 2024.

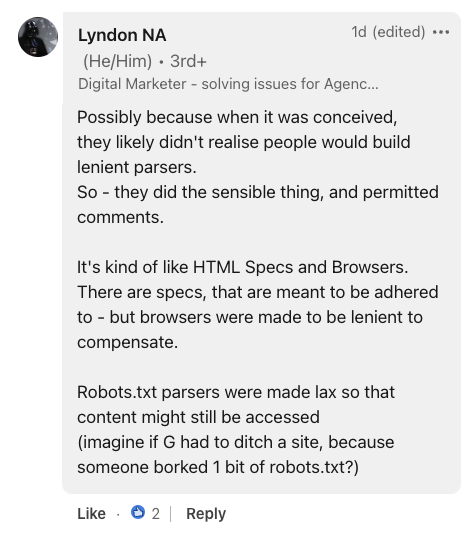

Screenshot from LinkedIn, July 2024.

Offering historical context, Lyndon NA, a digital marketer, compared robots.txt to HTML specifications and browsers.

He suggested that the file’s error tolerance was likely an intentional design choice, stating:

“Robots.txt parsers were made lax so that content might still be accessed (imagine if G had to ditch a site, because someone borked 1 bit of robots.txt?).”


Screenshot from LinkedIn, July 2024.

Why SEJ Cares

Understanding the nuances of the robots.txt file can help you optimize sites more effectively.

While the file’s error-tolerant nature is generally beneficial, it can let mistakes go unnoticed if the file is not managed carefully.

What To Do With This Information

- Review your robots.txt file: Ensure it contains only necessary directives and is free from errors or misconfigurations.

- Be careful with spelling: Because parsers may silently ignore a misspelled directive, a typo can result in unintended crawling behavior.

- Use line comments: Comments can document your robots.txt file for future reference.
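The spelling point is easy to demonstrate. In this minimal sketch, again using Python’s `urllib.robotparser` with a hypothetical `/checkout/` rule and URL, a single typo (`Disallw`) causes the rule to be dropped without any warning, so the path becomes crawlable. Other parsers may behave differently; some, including Google’s open-source robots.txt parser, accept a few common misspellings of `disallow`, so treat this as an illustration rather than a description of any particular crawler.

```python
from urllib.robotparser import RobotFileParser

CORRECT = """\
User-agent: *
Disallow: /checkout/
"""

# Hypothetical typo: "Disallw" instead of "Disallow".
TYPO = """\
User-agent: *
Disallw: /checkout/
"""

URL = "https://example.com/checkout/cart"


def can_crawl(robots_txt: str, url: str, agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt text lets `agent` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


print("correct spelling:", can_crawl(CORRECT, URL))  # False: path is blocked
print("misspelled:", can_crawl(TYPO, URL))  # True: the rule was ignored
```

Nothing in the output flags the typo, which is why a periodic review of the file, as suggested above, is worthwhile.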

Featured Image: sutadism/Shutterstock
